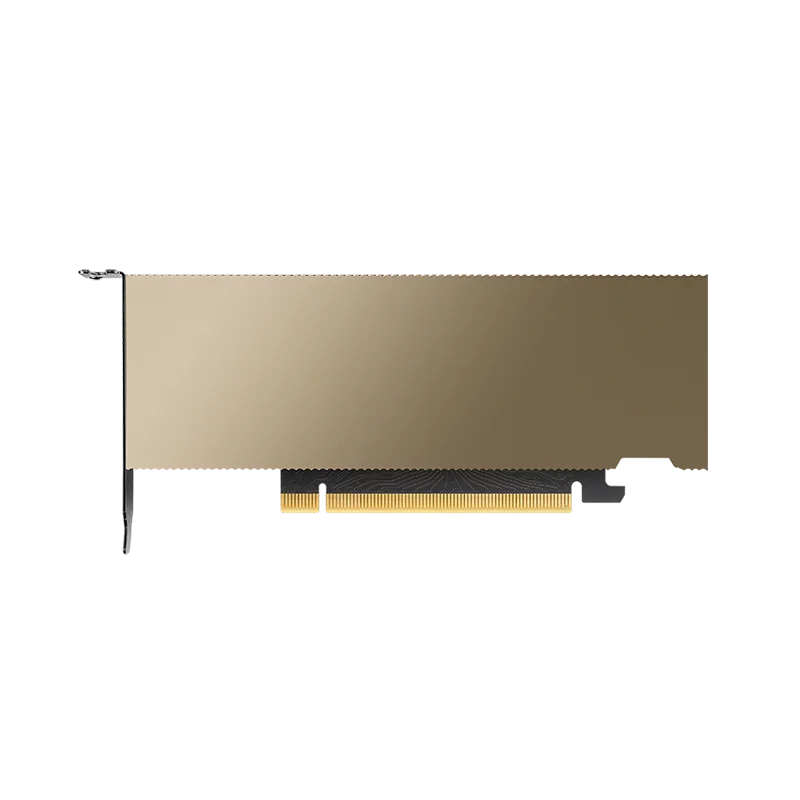

NVIDIA L4

Low-profile, energy-efficient inference accelerator

- VRAM: 24 GB

- Bandwidth: 300 GB/s

- FP16: 242 TFLOPS

- TDP: 72W

Technical Specifications

| Specification | Value |
| --- | --- |
| VRAM | 24 GB GDDR6 |
| Memory Bandwidth | 300 GB/s |
| FP16 Performance | 242 TFLOPS |
| BF16 Performance | 242 TFLOPS |
| FP32 Performance | 30.3 TFLOPS |
| INT8 Performance | 485 TOPS |
| TDP | 72W |
| Form Factor | PCIe Gen4 Single-Slot Low-Profile |
| PCIe Interface | PCIe Gen4 x16 |
| Max GPUs per Server | Up to 8 (dense 1U/2U) |

Prices vary with supply and import costs. Contact us for current India pricing.

Best For

Not Ideal For

- Large LLMs over 30B parameters (24 GB VRAM limit)

- Training workloads (designed for inference)

- Memory-bandwidth-intensive tasks (300 GB/s is modest)
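To put the bandwidth limit in numbers, the sketch below applies a common rule of thumb: single-stream LLM decoding has to read every weight once per generated token, so tokens per second cannot exceed memory bandwidth divided by the size of the weights. The function name and the example model sizes are illustrative assumptions, and the result is only an upper bound that ignores KV-cache traffic, compute time, and batching.

```python
def max_decode_tokens_per_s(bandwidth_gb_s: float,
                            params_billion: float,
                            bytes_per_param: float) -> float:
    """Upper bound on batch-1 decode speed when weight reads dominate."""
    # 1e9 parameters * bytes per parameter / 1e9 bytes per GB = GB of weights
    weight_gb = params_billion * bytes_per_param
    return bandwidth_gb_s / weight_gb


L4_BANDWIDTH_GB_S = 300.0  # from the specification table

# Hypothetical model sizes and precisions, chosen only for illustration.
examples = [("7B FP16", 7, 2.0), ("7B INT8", 7, 1.0), ("13B INT8", 13, 1.0)]

for name, params_b, bytes_pp in examples:
    bound = max_decode_tokens_per_s(L4_BANDWIDTH_GB_S, params_b, bytes_pp)
    print(f"{name}: at most {bound:.1f} tokens/s per stream")
```

Batching several requests together amortizes each weight read across multiple streams, which is why throughput-oriented serving suits the L4 better than latency-critical single-stream generation.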

Overview

The NVIDIA L4 is designed for high-density, energy-efficient inference. At just 72W TDP in a single-slot low-profile form factor, it delivers up to 242 FP16 TFLOPS while fitting in virtually any server chassis. This makes it ideal for organizations that need to maximize inference throughput per rack unit.

A single 2U server can house up to 8 L4 GPUs that draw just 576W in total for the GPU tier. By comparison, eight H100 SXM GPUs draw 5,600W (700W each). For inference workloads where the L4's latency is acceptable and throughput per watt matters, few accelerators match its efficiency.
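The power comparison is simple arithmetic, sketched here for anyone sizing a rack. The TDP and TFLOPS values come from this page; the 700W per H100 SXM is derived from the 5,600W figure for eight GPUs. The helper name is an illustrative assumption, and the totals cover the GPUs only, not CPUs, fans, or networking.

```python
L4_TDP_W = 72            # from the specification table
L4_FP16_TFLOPS = 242     # peak figure from the specification table
H100_SXM_TDP_W = 700     # 5,600W / 8 GPUs, as quoted in the text
GPUS_PER_SERVER = 8


def gpu_tier_power_w(tdp_w: int, gpu_count: int) -> int:
    """Total GPU power draw at TDP, excluding the rest of the server."""
    return tdp_w * gpu_count


l4_power = gpu_tier_power_w(L4_TDP_W, GPUS_PER_SERVER)
h100_power = gpu_tier_power_w(H100_SXM_TDP_W, GPUS_PER_SERVER)

print(f"8x L4 GPU tier:       {l4_power} W")
print(f"8x H100 SXM GPU tier: {h100_power} W")
print(f"Power ratio:          {h100_power / l4_power:.2f}x")
print(f"L4 peak FP16 per watt: {L4_FP16_TFLOPS / L4_TDP_W:.2f} TFLOPS/W")
```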

The 24 GB GDDR6 VRAM handles most production inference models, including LLaMA 7B, LLaMA 13B (with 8-bit or lower quantization, since 13B parameters at FP16 need about 26 GB for weights alone), Stable Diffusion, Whisper, and typical computer vision models. For video AI workloads, the L4 includes hardware decode engines that handle up to 68 concurrent 1080p streams.
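A quick way to check whether a model fits in 24 GB is to multiply the parameter count by the bytes per parameter and leave headroom for the KV cache and activations. The sketch below does that; the 20% headroom, the function names, and the precision table are assumptions for illustration, not measured requirements, and real usage depends on context length, batch size, and the serving framework.

```python
L4_VRAM_GB = 24.0  # from the specification table

# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billion: float, precision: str) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


def fits_on_l4(params_billion: float, precision: str,
               overhead_fraction: float = 0.2) -> bool:
    """True if weights plus an assumed runtime overhead fit in 24 GB."""
    needed_gb = weight_memory_gb(params_billion, precision) * (1 + overhead_fraction)
    return needed_gb <= L4_VRAM_GB


for params_b, precision in [(7, "fp16"), (13, "fp16"), (13, "int8"), (70, "int4")]:
    verdict = "fits" if fits_on_l4(params_b, precision) else "does not fit"
    size = weight_memory_gb(params_b, precision)
    print(f"{params_b}B @ {precision}: {size:.1f} GB weights, {verdict}")
```

Under these assumptions a 7B model fits at FP16, a 13B model needs 8-bit or lower quantization, and a 70B model does not fit on a single card even at 4-bit.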

Get NVIDIA L4 pricing for your setup

Tell us your workload and cluster size. We'll quote the complete solution including servers, networking, and colocation.