GPU Catalog

NVIDIA & AMD Data Center GPUs

Data center and workstation GPUs for AI, HPC, and rendering. We source from NVIDIA and AMD. Tell us your workload, we'll recommend the right family.

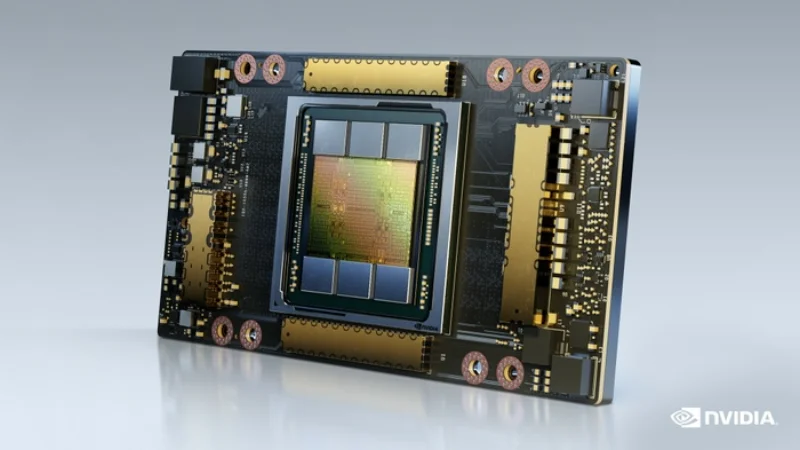

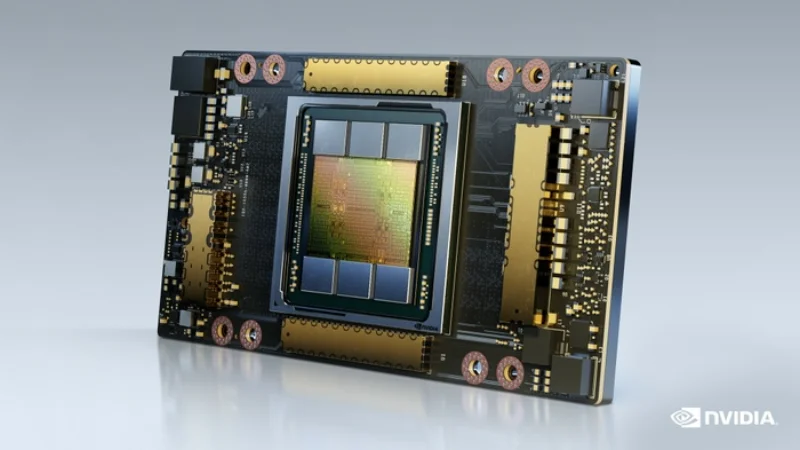

NVIDIA A-Series (A100 / A30)

Ampere · 24–80 GB HBM2e

The NVIDIA A-Series, powered by the Ampere architecture, remains the most widely deployed data- centre GPU family for production AI workloads. The A100 was the first GPU to introduce Multi- Instance GPU (MIG) technology, allowing a single 80 GB A100 to be partitioned into up to seven isolated GPU instances -- each with dedicated compute, memory, and cache resources. This makes the A100 exceptionally efficient for inference-heavy environments where multiple models or tenants share a single physical GPU. In its SXM4 form factor, the A100 80 GB delivers 312 TFLOPS of TF32 Tensor Core performance and connects to peer GPUs over third-generation NVLink at 600 GB/s bidirectional bandwidth. The PCIe variant (80 GB or 40 GB) fits standard 2U and 4U server chassis and is popular for inference servers that need a balance between GPU density and system cost. The A30 (24 GB HBM2e) offers a lower-power-budget option for mainstream inference at 165 W TDP. For organisations in India that have already invested in Ampere-generation infrastructure, RawCompute offers pre-owned and refurbished A100 systems at significantly reduced cost, along with full hardware warranty and NVIDIA-certified driver stacks. These are ideal for teams that need solid training capability without the premium of Hopper-generation hardware.

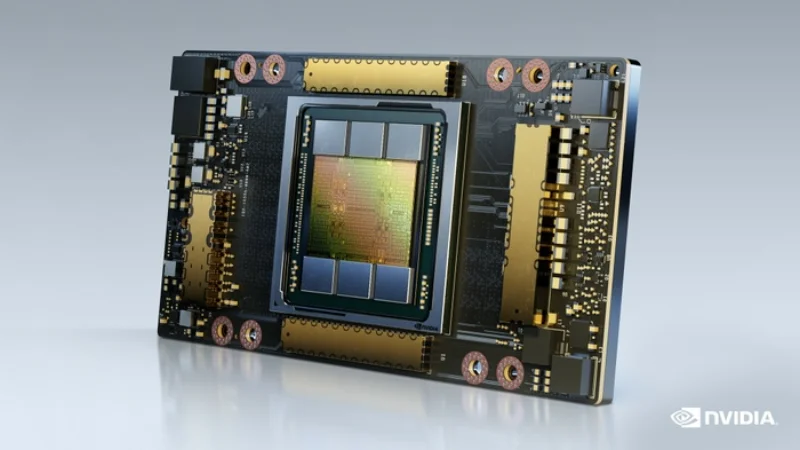

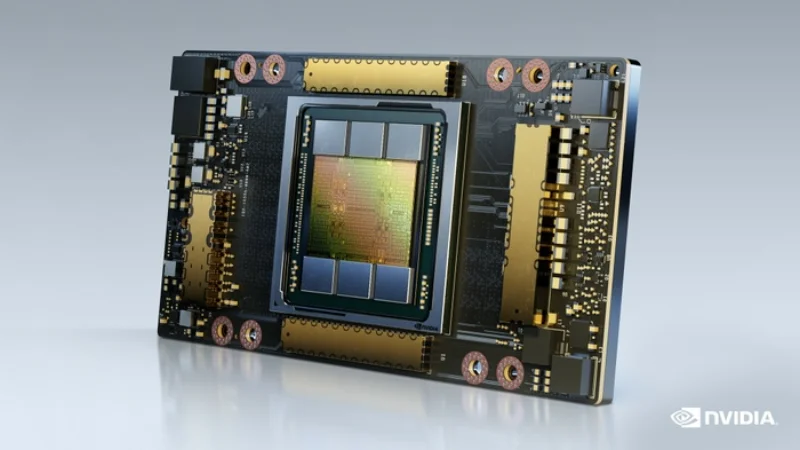

NVIDIA H-Series (H100 / H200)

Hopper · 80 GB HBM3 / 141 GB HBM3e

The NVIDIA H-Series represents the current pinnacle of data-centre AI accelerators built on the Hopper architecture. The H100 SXM5 delivers 3,958 TFLOPS of FP8 Tensor Core performance and features a dedicated Transformer Engine that dynamically switches between FP8 and FP16 precision to maximise throughput for transformer-based models without sacrificing convergence quality. Each H100 SXM5 module connects via fourth-generation NVLink at 900 GB/s bidirectional bandwidth, and an HGX baseboard of eight H100s is interconnected through NVSwitch 3.0, providing every GPU a full 900 GB/s all-to-all fabric -- critical for tensor-parallel and pipeline-parallel training strategies. The H200, its successor on the same Hopper silicon, upgrades memory to 141 GB of HBM3e at 4.8 TB/s, dramatically improving inference batch sizes and KV-cache capacity for long- context LLM serving. Both SKUs support PCIe Gen5 x16 for host attachment and include a hardware- based confidential computing mode via on-die TEE support. For Indian enterprises looking to build sovereign AI training clusters, RawCompute supplies certified HGX H100 and H200 systems from Supermicro, Dell, and ASUS with validated NVIDIA AI Enterprise software stacks. We also offer turnkey InfiniBand NDR fabric design to connect multi-node H100 clusters at 400 Gb/s per port.

AMD Instinct MI300X

CDNA 3 · 192 GB HBM3

The AMD Instinct MI300X is AMD's most ambitious data-centre GPU accelerator, built on the CDNA 3 architecture using a chiplet-based design with up to 12 XCDs (Accelerator Complex Dies) and 8 HBM3 stacks, totalling 192 GB of high-bandwidth memory at 5.3 TB/s aggregate bandwidth. This massive memory capacity -- more than double the H100's 80 GB -- is a decisive advantage for workloads that are memory-bandwidth-bound, such as serving large language models with extended context windows where the KV-cache alone can consume over 100 GB. The MI300X delivers 1,307 TFLOPS of FP16 matrix throughput and 163.4 TFLOPS of FP64, making it the highest FP64 performance GPU available -- an important metric for scientific computing applications like molecular dynamics (GROMACS, LAMMPS) and weather forecasting. AMD's ROCm 6 software stack has matured significantly, with first-class support for PyTorch, JAX, and vLLM, reducing the software-readiness gap with CUDA. An 8-GPU MI300X platform communicates over AMD Infinity Fabric Links at 896 GB/s total interconnect bandwidth across the UBB, comparable to NVLink on HGX systems. For Indian enterprises and research institutions evaluating alternatives to NVIDIA for large-scale AI infrastructure -- whether for cost, supply-chain, or strategic diversification reasons -- RawCompute offers MI300X platforms from Dell, HPE, and Supermicro with validated ROCm-based software stacks and integration support.

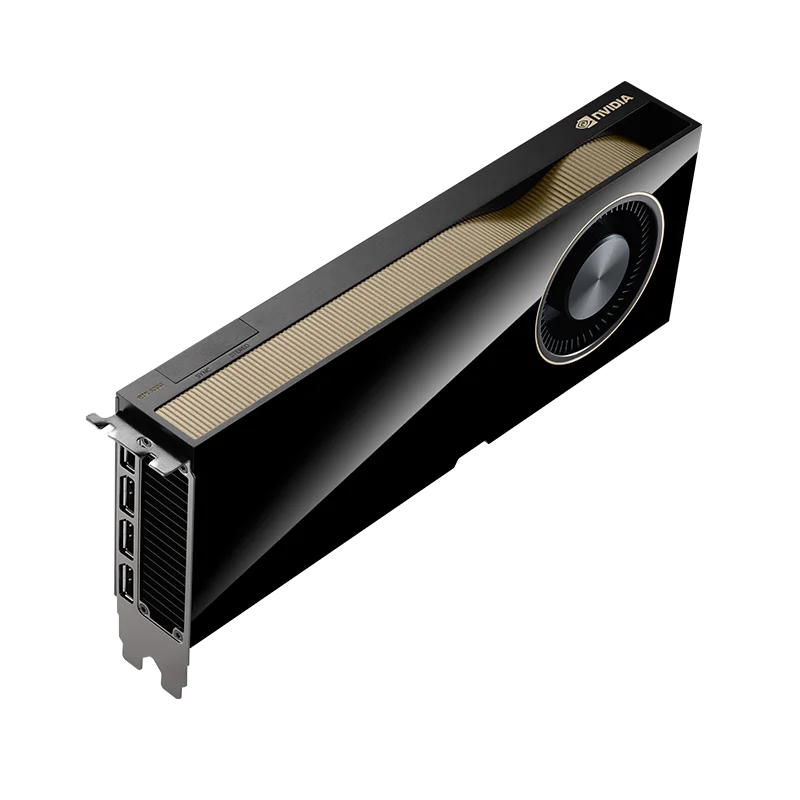

NVIDIA RTX 6000 Ada Generation

Ada Lovelace · 48 GB GDDR6 ECC

The NVIDIA RTX 6000 Ada Generation is the flagship professional-grade GPU, purpose-built for workstation and rack-mounted rendering workloads where certified driver stability, ECC memory, and ISV application compatibility are non-negotiable. It features 18,176 CUDA cores, 568 fourth- generation Tensor Cores, and 142 third-generation RT Cores on the full AD102 die, delivering 91.1 TFLOPS of FP32 shader performance and over 1,400 TFLOPS of FP8 Tensor throughput. The 48 GB of GDDR6 memory with ECC protection ensures data integrity during long-running rendering jobs and safety-critical simulation workloads in aerospace and automotive design. NVLink Bridge support allows two RTX 6000 Ada cards to be paired for 96 GB of unified GPU memory, which is essential for rendering extremely large scenes or running GPU-accelerated computational fluid dynamics solvers. Unlike consumer GeForce cards, the RTX 6000 Ada carries full ISV certification from Dassault Systemes, Autodesk, Siemens, PTC, and Adobe, guaranteeing validated performance across enterprise design applications. RawCompute supplies RTX 6000 Ada workstations from Lenovo ThinkStation, Dell Precision, and HP Z-series lines, as well as rack-mounted rendering nodes for render farm deployments. We also support vGPU licensing for VDI environments where multiple designers share a single physical GPU.

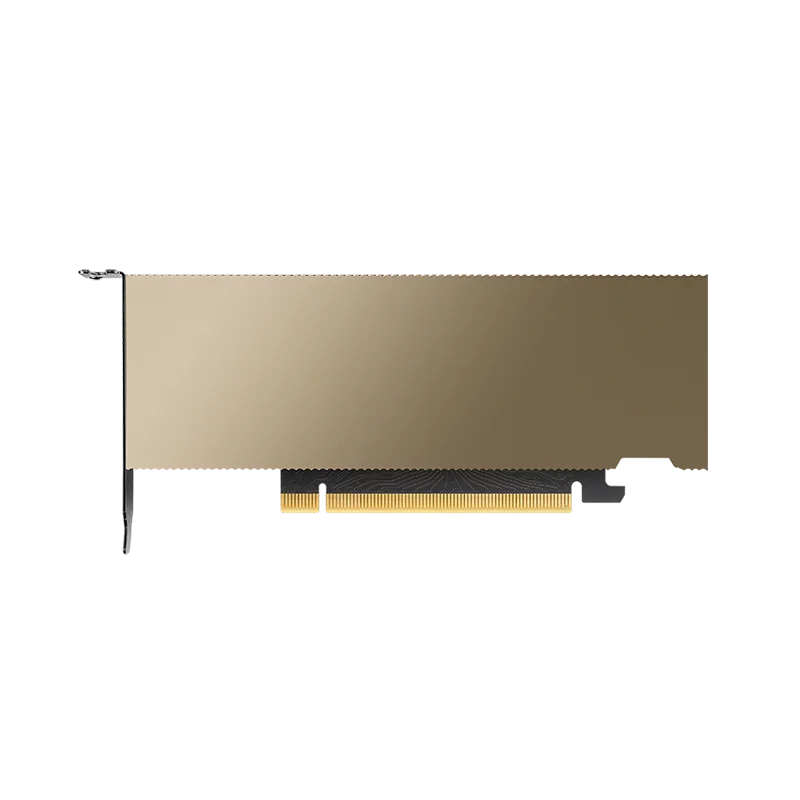

NVIDIA L-Series (L40S / L4)

Ada Lovelace · 24–48 GB GDDR6X

The NVIDIA L-Series brings the Ada Lovelace architecture -- with its fourth-generation Tensor Cores and third-generation RT Cores -- into a power-efficient data-centre form factor optimised for inference and media workloads. The L40S ships with 48 GB of GDDR6X, delivers 362 TFLOPS of FP8 Tensor performance, and draws just 350 W, making it a compelling option for dense inference servers where HBM-based GPUs are overkill. Its standout feature is hardware-accelerated video encode/decode (three NVENC / three NVDEC engines) plus AV1 encode support, which makes it the go-to choice for AI-powered video pipelines -- think real-time content moderation, automated subtitling, and live-stream object detection. The L4 is the low-profile, 72 W single-slot variant with 24 GB GDDR6X that fits into standard 1U and 2U server chassis without any PCIe riser or additional power connectors. Despite its compact size, the L4 delivers 120 TFLOPS of INT8 Tensor throughput, making it remarkably efficient for transformer inference at the edge or in space-constrained colocation racks. RawCompute stocks both L40S and L4 SKUs from Supermicro and Dell, and can configure multi-GPU inference servers with up to eight L4 accelerators in a single 2U chassis for maximum tokens-per-watt efficiency.

All GPU Models

Individual specs, use cases, and pricing for every GPU we supply.

NVIDIA H100 PCIe

Hopper80 GB HBM3 · 756.5 FP16 TFLOPS

View specs

NVIDIA H100 SXM

Hopper80 GB HBM3 · 989.4 FP16 TFLOPS

View specs

NVIDIA H200

Hopper141 GB HBM3e · 989.4 FP16 TFLOPS

View specs

NVIDIA L4

Ada Lovelace24 GB GDDR6 · 242 FP16 TFLOPS

View specs

NVIDIA L40S

Ada Lovelace48 GB GDDR6X · 733 FP16 TFLOPS

View specs

NVIDIA RTX 6000 Ada

Ada Lovelace48 GB GDDR6 ECC · 686.1 FP16 TFLOPS

View specs

NVIDIA A100 40GB

Ampere40 GB HBM2e · 624 FP16 TFLOPS

View specs

NVIDIA A100 80GB

Ampere80 GB HBM2e · 624 FP16 TFLOPS

View specs

NVIDIA A30

Ampere24 GB HBM2e · 165 FP16 TFLOPS

View specs

AMD Instinct MI300X

CDNA 3192 GB HBM3 · 1,307.4 FP16 TFLOPS

View specs

NVIDIA V100

Volta32 GB HBM2 · 125 FP16 TFLOPS

View specsWhich GPU for your workload?

SXM vs PCIe. What's the difference?

SXM GPUs connect via NVLink with significantly higher bandwidth between GPUs. Essential for multi-GPU training workloads like LLMs. PCIe GPUs are more widely compatible and cost-effective for single-GPU inference and rendering tasks. Not sure which you need? Describe your workload and we'll recommend.

Not sure which GPU? Describe your workload

We'll recommend the right GPU family and quote within 24 hours.